Data Containers: Data sets and Meta data

Rationale

Data products produced by data processing procedures are meant to be read, understood, and used by others. Many people store data with no note of meaning attached. Without an attached explanation, it is difficult for other people to fully understand or correctly use a collection of numbers. After a while, even the data producer may find it difficult to recall the exact meaning of the numbers. Someone who receives a data ‘product’ expects explanatory information to come with it, in addition to the datasets.

FDI implements a data product container scheme so that descriptions and other metadata (data about data) are always attached to the “payload” data, and so that your data can have its context data attached as lightweight references. Scalar, vector, array, and table data can be organized as sequences and mappings, with nesting and referencing.

FDI is meant to be a small open-source package. Data stored in FDI objects are easily accessible through the Python API and are exported (serialized and stored by default) in cross-platform, human-readable JSON format. There are heavier formats (e.g. HDF5) and packages (e.g. iRODS) with similar goals. FDI’s data model was originally inspired by Herschel Common Software System (HCSS, v15) products, taking other requirements of scientific observation and data processing into account. The APIs are kept as compatible with HCSS (written in Java, and in Jython for scripting) as possible.
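As a rough illustration of the idea (not FDI's actual serialization schema), the sketch below attaches a description and a unit to a list of numbers and round-trips the result through Python's standard `json` module. The key names `description`, `unit`, and `data` are assumptions chosen for this example only.

```python
import json

# Hypothetical layout: payload data stored together with its metadata.
product = {
    "description": "Detector counts per energy bin",
    "unit": "cnt",
    "data": [0, 43.2, 2000.0],
}

# Serialize to human-readable JSON text ...
text = json.dumps(product, indent=2)

# ... and restore it; the description travels with the numbers.
restored = json.loads(text)
print(restored["description"], restored["data"])
```

Because the explanation is stored in the same document as the numbers, a reader who receives only the JSON text still knows what the values mean.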

Data Containers

Dataset

Three types of datasets are implemented to store potentially any hierarchical data as a dataset. Like a product, all datasets may have meta data, with the distinction that the meta data of a dataset is related to that particular dataset only.

- array dataset:

a dataset containing array data (for example a vector, array, or cube). It may have a unit and a typecode for efficient storage.

Examples (from Quick Start page):

>>> # Creation with an array of data quickly

... a1 = [1, 4.4, 5.4E3, -22, 0xa2]

... v = ArrayDataset(a1)

... # Show it. This is the same as print(v) in a non-interactive environment.

... # "Default Meta." means the metadata settings are all default values.

... v

ArrayDataset(shape=(5,). data= [1, 4.4, 5400.0, -22, 162])

>>> # Create an ArrayDataset with some built-in properties set.

... v = ArrayDataset(data=a1, unit='ev', description='5 elements', typecode='f')

... #

... # add some metadata (see more about metadata below)

... v.meta['greeting'] = StringParameter('Hi there.')

... v.meta['year'] = NumericParameter(2020)

... v

ArrayDataset(shape=(5,), description=5 elements, unit=ev, typecode=f, greeting=Hi there., year=2020. data= [1, 4.4, 5400.0, -22, 162])

>>> # data access: read the third array element (index 2)

... v[2] # 5400

5400.0

>>> # built-in properties

... v.unit

'ev'

>>> # change it

... v.unit = 'm'

... v.unit

'm'

>>> # iteration

... for m in v:

...     print(m + 1)

2

5.4

5401.0

-21

163

>>> # a filter example

... [m**3 for m in v if m > 0 and m < 40]

[1, 85.18400000000003]

>>> # slice the ArrayDataset and only get part of its data

... v[2:-1]

[5400.0, -22]

>>> # set data to be a 2D array

... v.data = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]

... # slicing happens on the slowest dimension.

... v[0:2]

[[1, 2, 3], [4, 5, 6]]

>>> # Run this to see a demo of the ``toString()`` function:

... # make a 4-D array: a list of 2 lists of 3 lists of 4 lists of 5 elements.

... s = [[[[i + j + k + l for i in range(5)] for j in range(4)]

... for k in range(3)] for l in range(2)]

... v.data = s

... print(v.toString())

=== ArrayDataset (5 elements) ===

meta= {

=========== ============ ====== ======= ======= ========= ====== =====================

name value unit type valid default code description

=========== ============ ====== ======= ======= ========= ====== =====================

shape (2, 3, 4, 5) tuple None () Number of elements in

each dimension. Quic

k changers to the rig

ht.

description 5 elements string None UNKNOWN B Description of this d

ataset

unit m string None None B Unit of every element

.

typecode f string None UNKNOWN B Python internal stora

ge code.

version 0.1 string None 0.1 B Version of dataset

FORMATV 1.6.0.1 string None 1.6.0.1 B Version of dataset sc

hema and revision

greeting Hi there. string None B UNKNOWN

year 2020 None integer None None None UNKNOWN

=========== ============ ====== ======= ======= ========= ====== =====================

MetaData-listeners = ListnerSet{}}

ArrayDataset-dataset =

0 1 2 3 4

1 2 3 4 5

2 3 4 5 6

3 4 5 6 7

1 2 3 4 5

2 3 4 5 6

3 4 5 6 7

4 5 6 7 8

2 3 4 5 6

3 4 5 6 7

4 5 6 7 8

5 6 7 8 9

#=== dimension 4

1 2 3 4 5

2 3 4 5 6

3 4 5 6 7

4 5 6 7 8

2 3 4 5 6

3 4 5 6 7

4 5 6 7 8

5 6 7 8 9

3 4 5 6 7

4 5 6 7 8

5 6 7 8 9

6 7 8 9 10

#=== dimension 4

- table dataset:

a dataset containing a collection of columns, with the column header as the key. Each column contains an array dataset. All columns have the same number of rows.

Examples (from Quick Start page):

TableDataset is mainly a dictionary containing named Columns and their metadata. Columns are basically ArrayDatasets under a different name.

>>> # Create an empty TableDataset then add columns one by one

... v = TableDataset()

... v['col1'] = Column(data=[1, 4.4, 5.4E3], unit='eV')

... v['col2'] = Column(data=[0, 43.2, 2E3], unit='cnt')

... v

TableDataset(Default Meta.data= {"col1": Column(shape=(3,), unit=eV. data= [1, 4.4, 5400.0]), "col2": Column(shape=(3,), unit=cnt. data= [0, 43.2, 2000.0])})

>>> # Do it with another syntax, with a list of tuples and no Column()

... a1 = [('col1', [1, 4.4, 5.4E3], 'eV'),

... ('col2', [0, 43.2, 2E3], 'cnt')]

... v1 = TableDataset(data=a1)

... v == v1

True

>>> # Make a quick tabledataset -- data are list of lists without names or units

... a5 = [[1, 4.4, 5.4E3], [0, 43.2, 2E3], [True, True, False], ['A', 'BB', 'CCC']]

... v5 = TableDataset(data=a5)

... print(v5.toString())

=== TableDataset (UNKNOWN) ===

meta= {

=========== ======= ====== ====== ======= ========= ====== =====================

name value unit type valid default code description

=========== ======= ====== ====== ======= ========= ====== =====================

description UNKNOWN string None UNKNOWN B Description of this d

ataset

version 0.1 string None 0.1 B Version of dataset

FORMATV 1.6.0.1 string None 1.6.0.1 B Version of dataset sc

hema and revision

=========== ======= ====== ====== ======= ========= ====== =====================

MetaData-listeners = ListnerSet{}}

TableDataset-dataset =

column1 column2 column3 column4

(None) (None) (None) (None)

--------- --------- --------- ---------

1 0 True A

4.4 43.2 True BB

5400 2000 False CCC

>>> # access

... # get names of all columns (automatically given here)

... v5.getColumnNames()

['column1', 'column2', 'column3', 'column4']

>>> # get column by name

... my_column = v5['column1'] # [1, 4.4, 5.4E3]

... my_column.data

[1, 4.4, 5400.0]

>>> # by index

... v5[0].data # [1, 4.4, 5.4E3]

[1, 4.4, 5400.0]

>>> # get a list of all columns' data.

... # Note the slice "v5[:]" and syntax ``in``

... [c.data for c in v5[:]] # == a5

[[1, 4.4, 5400.0], [0, 43.2, 2000.0], [True, True, False], ['A', 'BB', 'CCC']]

>>> # indexOf by name

... v5.indexOf('column1') # == v5.indexOf(my_column)

0

>>> # indexOf by column object

... v5.indexOf(my_column) # 0

0

>>> # set cell value

... v5['column2'][1] = 123

... v5['column2'][1] # 123

123

>>> # row access by row index -- multiple and in custom order

... v5.getRow([2, 1]) # [(5400.0, 2000.0, False, 'CCC'), (4.4, 123, True, 'BB')]

[(5400.0, 2000.0, False, 'CCC'), (4.4, 123, True, 'BB')]

>>> # or with a slice

... v5.getRow(slice(0, -1))

[(1, 0, True, 'A'), (4.4, 123, True, 'BB')]

>>> # unit access

... v1['col1'].unit # == 'eV'

'eV'

>>> # add, set, and replace columns and rows

... # column set / get

... u = TableDataset()

... c1 = Column([1, 4], 'sec')

... # add

... u.addColumn('time', c1)

... u.columnCount # 1

1

>>> # for non-existing names, set behaves like addColumn.

... u['money'] = Column([2, 3], 'eu')

... u['money'][0] # 2

... # column increases

... u.columnCount # 2

2

>>> # addRow

... u.rowCount # 2

2

>>> u.addRow({'money': 4.4, 'time': 3.3})

... u.rowCount # 3

3

>>> # run this to see ``toString()``

... ELECTRON_VOLTS = 'eV'

... SECONDS = 'sec'

... t = [x * 1.0 for x in range(8)]

... e = [2.5 * x + 100 for x in t]

... d = [765 * x - 500 for x in t]

... # creating a table dataset to hold the quantified data

... x = TableDataset(description="Example table")

... x["Time"] = Column(data=t, unit=SECONDS)

... x["Energy"] = Column(data=e, unit=ELECTRON_VOLTS)

... x["Distance"] = Column(data=d, unit='m')

... # metadata is optional

... x.meta['temp'] = NumericParameter(42.6, description='Ambient', unit='C')

... print(x.toString())

=== TableDataset (Example table) ===

meta= {

=========== ============= ====== ====== ======= ========= ====== =====================

name value unit type valid default code description

=========== ============= ====== ====== ======= ========= ====== =====================

description Example table string None UNKNOWN B Description of this d

ataset

version 0.1 string None 0.1 B Version of dataset

FORMATV 1.6.0.1 string None 1.6.0.1 B Version of dataset sc

hema and revision

temp 42.6 C float None None None Ambient

=========== ============= ====== ====== ======= ========= ====== =====================

MetaData-listeners = ListnerSet{}}

TableDataset-dataset =

Time Energy Distance

(sec) (eV) (m)

------- -------- ----------

0 100 -500

1 102.5 265

2 105 1030

3 107.5 1795

4 110 2560

5 112.5 3325

6 115 4090

7 117.5 4855

- composite dataset:

a dataset containing a collection of datasets. This allows arbitrarily complex structures, as a child dataset within a composite dataset may itself be a composite dataset, and so on.
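The nesting idea can be sketched in plain Python, without FDI. In the sketch below a composite is modeled as a dict whose values are either leaf data (lists) or further dicts. `walk` is a hypothetical helper, not part of the FDI API, that lists the path to every leaf.

```python
def walk(composite, prefix=()):
    """Yield (path, leaf) pairs for every leaf in a nested dict."""
    for name, child in composite.items():
        path = prefix + (name,)
        if isinstance(child, dict):
            # A child that is itself a composite: recurse into it.
            yield from walk(child, path)
        else:
            yield path, child

# A composite holding an array-like leaf and a nested composite.
observation = {
    "spectrum": [1, 4.4, 5400.0],
    "housekeeping": {
        "temperature": [42.6, 42.7],
        "voltage": [3.3, 3.3],
    },
}

leaves = list(walk(observation))
for path, leaf in leaves:
    print("/".join(path), leaf)
```

The recursion stops only at non-composite children, so the same helper works for any depth of nesting.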

Meta data and Parameters

FDI datasets and products not only contain data, but also their metadata – data about the “payload” data. Metadata is defined as a collection of named Parameters.

A parameter often describes a property, so a parameter in the metadata of a dataset or product is often called a property.

- Parameter:

a scalar or vector variable with attributes.

There are the following parameter types:

- Parameter: Types are defined in metadata.ParameterTypes. If requested, a Parameter can check its value or a given value against the validity specification, which can be a combination of discrete values, ranges, and bit-masked values.
- NumericParameter
- DateParameter
- StringParameter

| Parameter class | Parameter value | Parameter attributes |
|---|---|---|
| Parameter | typed objects | description, type, validity descriptor, and default value |
| NumericParameter | a number (scalar), a | all of the above plus a unit and a typecode |
| DateParameter | | same as Parameter; type is ‘finetime’, with a Python datetime format string as the default typecode |
| StringParameter | | same as Parameter; type is ‘string’, with ‘B’ (unsigned byte) as the default typecode |

Examples (from Quick Start page):

>>> # Creation

... # The standard way -- with keyword arguments

... v = Parameter(value=9000, description='Average age', typ_='integer')

... v.description # 'Average age'

'Average age'

>>> v.value # == 9000

9000

>>> v.type # == 'integer'

'integer'

>>> # test equals.

... # FDI DeepEqual interface class recursively compares all components.

... v1 = Parameter(description='Average age', value=9000, typ_='integer')

... v.equals(v1)

True

>>> # more readable 'equals' syntax

... v == v1

True

>>> # make them not equal.

... v1.value = -4

... v.equals(v1) # False

False

>>> # math syntax

... v != v1 # True

True

>>> # NumericParameter with two valid values and a valid range.

... v = NumericParameter(value=9000, valid={

... 0: 'OK1', 1: 'OK2', (100, 9900): 'Go!'})

... # There are three valid conditions

... v

NumericParameter(description="UNKNOWN", type="integer", default=None, value=9000, valid=[[0, 'OK1'], [1, 'OK2'], [[100, 9900], 'Go!']], unit=None, typecode=None, _STID="NumericParameter")

>>> # The current value is valid

... v.isValid()

True

>>> # check if other values are valid according to specification of this parameter

... v.validate(600) # valid

(600, 'Go!')

>>> v.validate(20) # invalid

(Invalid, 'Invalid')
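The lookup behind such a validity specification can be sketched in plain Python. This is an assumption about the logic, not FDI's implementation: `check` is a hypothetical function that treats tuple keys as inclusive ranges and all other keys as discrete values, and the string `'Invalid'` stands in for FDI's `Invalid` marker.

```python
def check(value, rules):
    """Return (value, rule_name) for the first matching rule."""
    for key, name in rules.items():
        if isinstance(key, tuple):
            low, high = key
            if low <= value <= high:   # range rule, bounds assumed inclusive
                return value, name
        elif value == key:             # discrete-value rule
            return value, name
    # No rule matched: report the value as invalid.
    return "Invalid", "Invalid"

rules = {0: 'OK1', 1: 'OK2', (100, 9900): 'Go!'}
print(check(600, rules))   # (600, 'Go!')
print(check(20, rules))    # ('Invalid', 'Invalid')
```

With the same three rules as in the example above, 600 falls in the range and 20 matches nothing.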

- Metadata:

a class that manages parameters for datasets and products.

Examples (from Quick Start page):

A Metadata instance is mainly a dict-like container for named parameters.

>>> # Creation. Start with numeric parameter.

... a1 = 'weight'

... a2 = NumericParameter(description='How heavy is the robot.',

... value=60, unit='kg', typ_='float')

... # make an empty MetaData instance.

... v = MetaData()

... # place the parameter with a name

... v.set(a1, a2)

... # get the parameter with the name.

... v.get(a1) # == a2

NumericParameter(description="How heavy is the robot.", type="float", default=None, value=60.0, valid=None, unit="kg", typecode=None, _STID="NumericParameter")

>>> # add more parameter. Try a string type.

... v.set(name='job', newParameter=StringParameter('pilot'))

... # get the value of the parameter

... v.get('job').value # == 'pilot'

'pilot'

>>> # access parameters in metadata

... # a more readable way to set/get a parameter than "v.set(a1,a2)", "v.get(a1)"

... v['job'] = StringParameter('waitress')

... v['job'] # == waitress

StringParameter(description="UNKNOWN", default="", value="waitress", valid=None, typecode="B", _STID="StringParameter")

>>> # same result as...

... v.get('job')

StringParameter(description="UNKNOWN", default="", value="waitress", valid=None, typecode="B", _STID="StringParameter")

>>> # Date-type parameters use International Atomic Time (TAI) to keep time,

... # with 1-microsecond precision

... v['birthday'] = Parameter(description='was born on',

... value=FineTime('1990-09-09T12:34:56.789098 UTC'))

... # FDI uses International Atomic Time (TAI) internally to record time.

... # The format is the integer number of microseconds since 1958-01-01 00:00:00 UTC.

... v['birthday'].value.tai

Time zone stripped for 1990-09-09T12:34:56.789098 UTC according to format.

1031574921789098
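The number above can be reproduced with the standard library, as a sketch. It assumes that the count starts at 1958-01-01 and that the offset between TAI and UTC (25 s in September 1990) is added to the elapsed UTC time. `to_tai_us` and its `tai_minus_utc` argument are hypothetical names, and FDI's own conversion may be implemented differently.

```python
from datetime import datetime, timedelta, timezone

# Start of the count, taken from the comment in the example above.
EPOCH = datetime(1958, 1, 1, tzinfo=timezone.utc)

def to_tai_us(utc_time, tai_minus_utc):
    """Microseconds since 1958-01-01, shifted by the TAI-UTC offset in seconds."""
    # Integer division by one microsecond keeps the result an exact int.
    elapsed_us = (utc_time - EPOCH) // timedelta(microseconds=1)
    return elapsed_us + tai_minus_utc * 1_000_000

birthday = datetime(1990, 9, 9, 12, 34, 56, 789098, tzinfo=timezone.utc)
# TAI was 25 s ahead of UTC throughout 1990.
print(to_tai_us(birthday, 25))   # 1031574921789098
```

The offset has to be supplied by the caller here because the standard library has no leap-second table.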

>>> # names of all parameters

... [n for n in v] # == ['weight', 'job', 'birthday']

['weight', 'job', 'birthday']

>>> # remove parameter from metadata. # function inherited from Composite class.

... v.remove(a1)

... v.size() # == 2

2

>>> # The value of the next parameter is valid from 0 to 31 and can also be 99

... valid_rule = {(0, 31): 'valid', 99: ''}

... v['a'] = NumericParameter(

... 3.4, 'rule name, if is "valid", "", or "default", is ommited in value string.', 'float', 2., valid=valid_rule)

... v['a'].isValid() # True

True

>>> then = datetime(

... 2019, 2, 19, 1, 2, 3, 456789, tzinfo=timezone.utc)

... # The value of the next parameter is valid from TAI=0 to 9876543210123456

... valid_rule = {(0, 9876543210123456): 'alive'}

... v['b'] = DateParameter(FineTime(then), 'date param', default=99,

... valid=valid_rule)

... # set the display format to year and minutes ('%Y-%M'; '%m' would give the month)

... v['b'].format = '%Y-%M'

... # The only valid value of the next parameter is the empty string, so 'Right' is invalid.

... v['c'] = StringParameter(

... 'Right', 'str parameter. but only "" is allowed.', valid={'': 'empty'}, default='cliche', typecode='B')

>>> # The value of the next parameter is for a detector status.

... # The information is packed in a byte, and is extractable with suitable binary masks:

... # Bit7~Bit6 port status [01: port 1; 10: port 2; 11: port closed];

... # Bit5 processing using the main processor or a stand-by one [0: stand by; 1: main];

... # Bit4 PPS status [0: error; 1: normal];

... # Bit3~Bit0 reserved.

... valid_rule = {

... (0b11000000, 0b01): 'port_1',

... (0b11000000, 0b10): 'port_2',

... (0b11000000, 0b11): 'port closed',

... (0b00100000, 0b0): 'stand_by',

... (0b00100000, 0b1): 'main',

... (0b00010000, 0b0): 'error',

... (0b00010000, 0b1): 'normal',

... (0b00001111, 0b0): 'reserved'

... }

... v['d'] = NumericParameter(

... 0b01010110, 'valid rules described with binary masks', valid=valid_rule)

... # this returns the tested value, the rule name, the height and width of every mask.

... v['d'].validate(0b01010110)

[(1, 'port_1', 8, 2),

(0, 'stand_by', 6, 1),

(1, 'normal', 5, 1),

(Invalid, 'Invalid')]
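The result above can be reproduced with a few bit operations. The sketch below is an assumption about the logic rather than FDI's code: `check_masks` is a hypothetical function, the height is taken to be the position of the highest bit of the mask, the width is the number of bits in the mask, and the string `'Invalid'` stands in for FDI's `Invalid` marker.

```python
def check_masks(value, rules):
    """For every distinct mask, extract the masked field and look up its name."""
    results = []
    for mask in dict.fromkeys(m for m, _ in rules):   # distinct masks, in order
        shift = (mask & -mask).bit_length() - 1       # number of trailing zero bits
        field = (value & mask) >> shift               # masked bits, right-aligned
        name = rules.get((mask, field))
        if name is None:
            results.append(("Invalid", "Invalid"))
        else:
            height = mask.bit_length()                # position of the highest mask bit
            width = bin(mask).count("1")              # number of bits in the mask
            results.append((field, name, height, width))
    return results

valid_rule = {
    (0b11000000, 0b01): 'port_1',
    (0b11000000, 0b10): 'port_2',
    (0b11000000, 0b11): 'port closed',
    (0b00100000, 0b0): 'stand_by',
    (0b00100000, 0b1): 'main',
    (0b00010000, 0b0): 'error',
    (0b00010000, 0b1): 'normal',
    (0b00001111, 0b0): 'reserved',
}
status = check_masks(0b01010110, valid_rule)
print(status)
```

The last entry is invalid because the reserved bits (Bit3 to Bit0) hold `0b0110`, while the only rule for that mask expects zero.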

>>> # string representation. This is the same as v.toString(level=0), most detailed.

... print(v.toString())

======== ==================== ====== ======== ==================== ================= ====== =====================

name value unit type valid default code description

======== ==================== ====== ======== ==================== ================= ====== =====================

job waitress string None B UNKNOWN

birthday 1990-09-09T12:34:56. finetime None None was born on

789098

1031574921789098

a 3.4 None float (0, 31): valid 2.0 None rule name, if is "val

99: id", "", or "default"

, is ommited in value

string.

b alive (2019-02-19T01 finetime (0, 9876543210123456 1958-01-01T00:00: Q date param

:02:03.456789 ): alive 00.000099

1929229360456789) 99

c Invalid (Right) string '': empty cliche B str parameter. but on

ly "" is allowed.

d port_1 (0b01) None integer 11000000 0b01: port_ None None valid rules described

stand_by (0b0) 1 with binary masks

normal (0b1) 11000000 0b10: port_

Invalid 2

11000000 0b11: port

closed

00100000 0b0: stand_

by

00100000 0b1: main

00010000 0b0: error

00010000 0b1: normal

00001111 0b0000: res

erved

======== ==================== ====== ======== ==================== ================= ====== =====================

MetaData-listeners = ListnerSet{}

>>> # simplified string representation, toString(level=1)

... v

job=waitress, birthday=1031574921789098, a=3.4, b=alive (1929229360456789), c=Invalid (Right), d=port_1 (0b01), stand_by (0b0), normal (0b1), Invalid.

>>> # simplest string representation, toString(level=2).

... print(v.toString(level=2))

job=waitress, birthday=FineTime(1990-09-09T12:34:56.789098), a=3.4, b=alive (FineTime(2019-02-19T01:02:03.456789)), c=Invalid (Right), d=port_1 (0b01), stand_by (0b0), normal (0b1), Invalid.

run tests

You can test sub-package dataset and utils with test1 and test5 respectively.

In the install directory:

make test1

make test5

test3 is for the pns server self-test only.

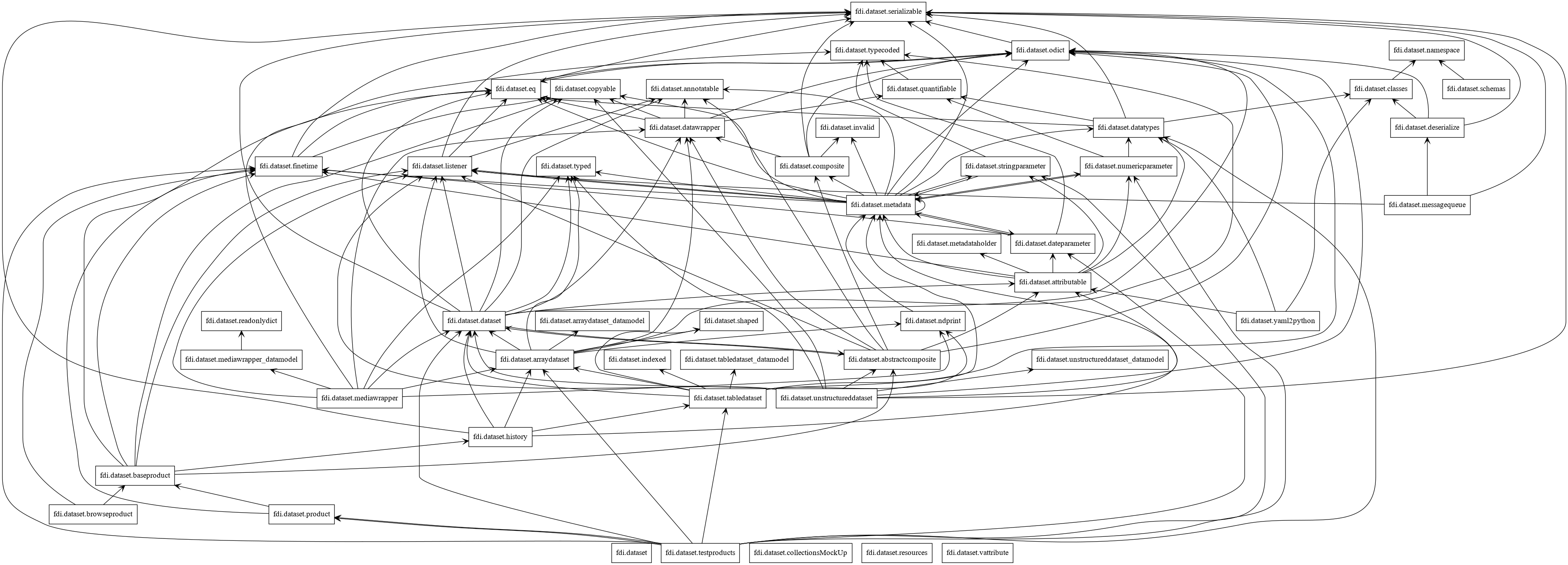

Design

Packages

Classes